Last updated: April 28, 2026

This is a practical guide to FORMLOVA's sales email detection feature. For the broader contact-form operating model, see the Contact Form Operations Guide. For the post-publish operations layer, see the MCP Form Service Guide. For front-end defenses against contact form spam, see the Contact Form Spam Guide. For the release note, see AI Now Detects Sales Emails in Your Forms. For the product thinking behind the feature, see Why We Built Sales Email Detection.

Sales pitches in a contact form do not just clutter an inbox.

They distort reports, trigger noisy Slack notifications, pollute exports, and make real inquiries harder to find.

FORMLOVA sales email detection solves this by labeling incoming responses as legitimate, sales, or suspicious. It does not silently delete submissions. It gives your team a safer way to separate real inquiries from sales noise.

This guide explains how to enable the feature, read the labels, correct mistakes, exclude sales emails from analytics and exports, and use the labels in workflows.

Quick Answer

Use sales email detection when your contact form receives unsolicited pitches and you need cleaner operations.

| Goal | Use |
|---|---|
| Identify sales pitches | Automatic response labels |
| Review uncertain responses | suspicious filter |
| Keep reports clean | Exclude sales from analytics |
| Export real inquiries | CSV / Excel export with sales excluded |
| Reduce noisy notifications | Workflow conditions |
| Fix wrong labels | Manual override in response detail |

The feature is designed as a labeling layer, not a blocking layer.

That matters. If an AI silently blocks a real inquiry by mistake, the lead is gone. If it labels something incorrectly, a human can correct it.

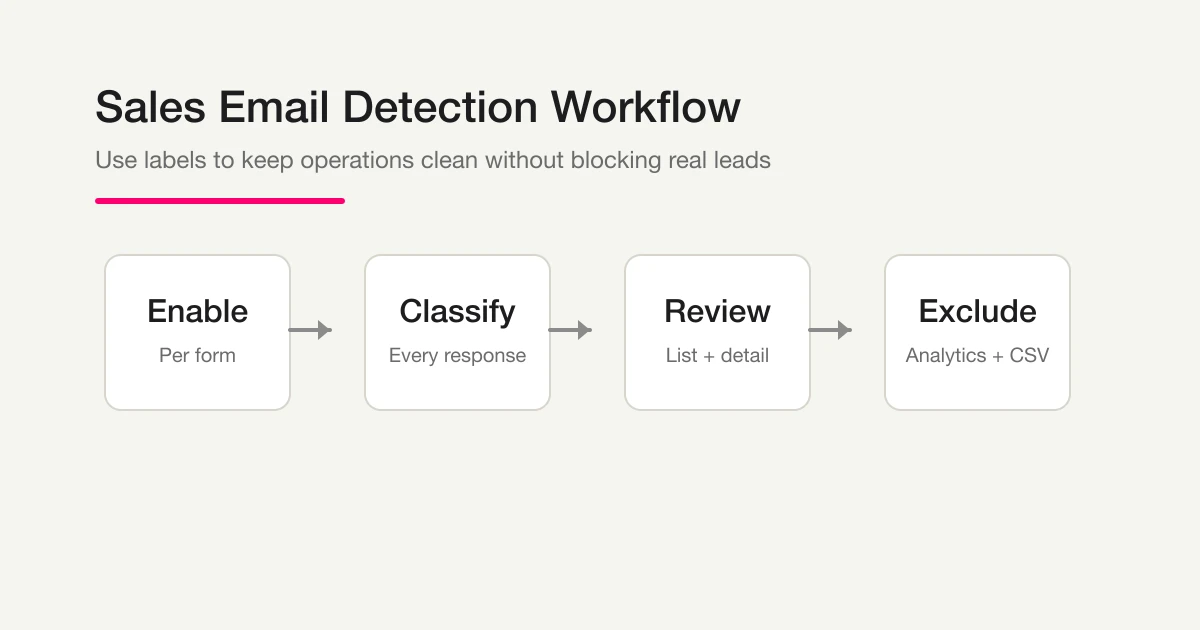

The Basic Workflow

The workflow has four steps.

- Enable detection on a form.

- New responses are classified automatically.

- Review labels in the response list or detail view.

- Exclude sales-labeled responses from analytics, exports, and workflows.

Only new responses are classified after detection is enabled. FORMLOVA does not automatically backfill old responses. That is intentional: existing database content should not be reprocessed without a clear user action.

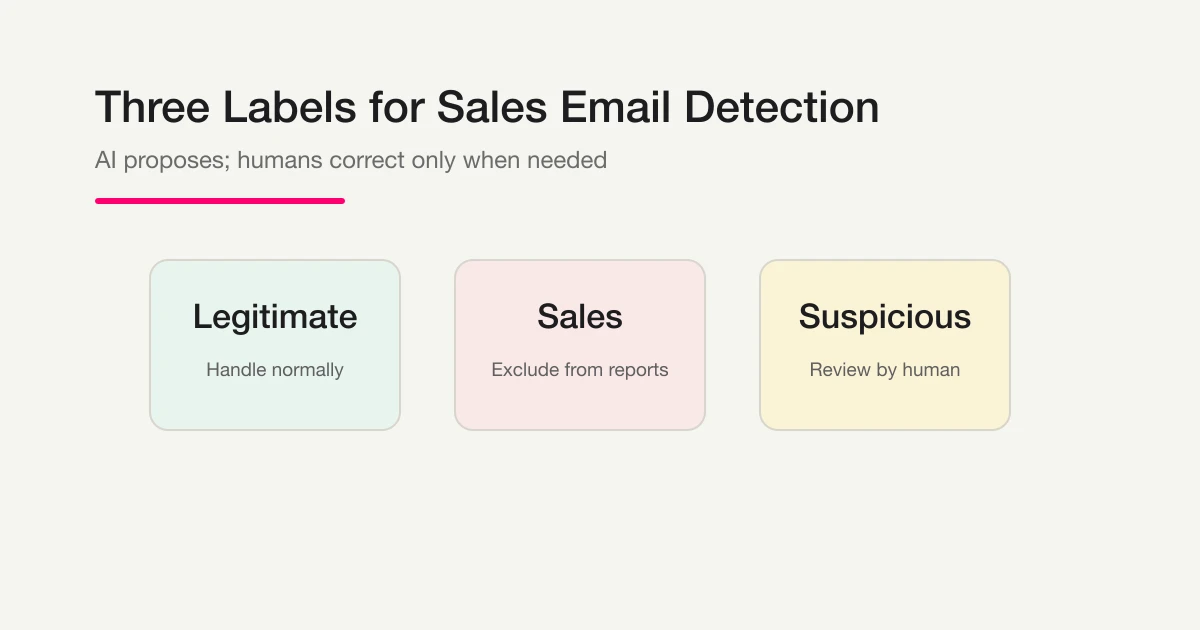

The Three Labels

FORMLOVA uses three labels.

| Label | Meaning | Recommended action |
|---|---|---|
| legitimate | The response fits the form's purpose | Handle normally |
| sales | The response is a sales pitch or unsolicited promotion | Exclude from reports or low-priority workflows |
| suspicious | The response might be sales, but it is not safe to decide automatically | Review manually |

The important label is suspicious.

Business messages are often ambiguous. A partnership inquiry may be relevant. A reseller proposal may be noise. A recruiting pitch might be unwanted on a support form but valid on a hiring form.

FORMLOVA keeps this gray zone visible instead of pretending every response can be decided perfectly.

Label Examples

Use the form's purpose as the main test.

| Response | Label | Why |
|---|---|---|
| "I want to discuss pricing for your product." | legitimate | Product inquiry |
| "Can we book a demo next week?" | legitimate | Sales-qualified lead |
| "We provide SEO services and would like to help you." | sales | External service pitch |
| "We are a recruitment agency and can introduce candidates." | sales | Vendor outreach |
| "We want to discuss a potential partnership." | suspicious | Could be relevant or promotional |
| "Can we interview your team for a publication?" | suspicious | Needs context |

When in doubt, review manually.

Enable Sales Email Detection

Sales email detection is enabled per form.

For a new form, FORMLOVA asks about detection during the publish checklist when the form includes text inputs.

You can answer:

Enable sales email detection.

For an existing form:

Turn on sales email detection for the contact form.

You can also use the form settings screen in the dashboard.

Detection starts from future submissions. Old responses are not classified retroactively.

First-Week Checklist

For the first week, treat sales email detection as an operational setting, not just a toggle.

- [ ] Enable detection on the right form
- [ ] Add an anti-solicitation notice near the form
- [ ] Review suspicious responses daily or weekly
- [ ] Correct obvious false positives and false negatives
- [ ] Decide whether marketing reports exclude sales by default
- [ ] Decide whether Slack notifications should skip sales
- [ ] Keep full exports available for audit or history

This prevents the most common failure: turning the feature on, but never deciding how the team should use the labels.

Small teams can start with a weekly review of suspicious responses. Larger teams may want daily review during the first two weeks, then relax once the pattern is clear.

The point is to make the labels part of a repeatable workflow. Decide who owns review, where clean numbers are used, and which downstream tools should ignore sales-labeled responses.

Write that rule down before volume increases.

Which Forms Should Use It

Sales email detection is most useful on forms where people can write free text.

| Form type | Fit |
|---|---|
| Contact form | High |
| Resource request form | High |
| Job application form | Medium |
| Webinar registration form | Medium |
| Selection-only survey | Low |
| Paid event form | Usually skipped |

Selection-only forms give senders little room to write a pitch. Paid event forms are also unlikely to attract sales pitches because the sender would have to go through payment-related steps.

Review Labels in the Dashboard

The response list shows labels for sales and suspicious responses.

Legitimate responses are intentionally less noisy. The dashboard should draw attention to the rows that need review, not decorate every normal response.

Check three fields:

| Field | Meaning |
|---|---|
| Label | legitimate, sales, or suspicious |
| Score | Confidence indicator for non-legitimate labels |
| Source | auto for AI classification, manual for human correction |

The best daily habit is to review suspicious first. Sales-labeled rows can often be deprioritized, but suspicious rows may contain real inquiries.
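The review habit above can be sketched in a few lines of Python. This is an illustration only, not FORMLOVA's API: the `Response` record, the `review_queue` helper, and the 0-to-1 score scale are all hypothetical names and assumptions. The sketch puts suspicious rows first, then sales rows, and within each group shows the least confident rows first, which is one possible ordering rather than a documented one.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Response:
    """Hypothetical record mirroring the three dashboard fields."""
    id: int
    label: Optional[str]    # "legitimate", "sales", "suspicious", or None if unlabeled
    score: Optional[float]  # confidence for non-legitimate labels (0-1 scale is an assumption)
    source: Optional[str]   # "auto" or "manual"


# Suspicious rows may hide real inquiries, so they are reviewed first.
REVIEW_PRIORITY = {"suspicious": 0, "sales": 1}


def review_queue(responses: List[Response]) -> List[Response]:
    """Return auto-labeled rows worth a look: suspicious first, then sales.

    Rows a human already corrected (source == "manual") are skipped.
    Within each label, lower-confidence rows come first.
    """
    pending = [
        r for r in responses
        if r.label in REVIEW_PRIORITY and r.source != "manual"
    ]
    return sorted(pending, key=lambda r: (REVIEW_PRIORITY[r.label], r.score or 0.0))
```

Legitimate rows never enter the queue, which matches the idea that the dashboard should draw attention only to rows that need review.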

Correct Mistakes Manually

AI classification is not the final authority.

Open a response detail view and change the label when needed. Manual corrections are stored as manual labels and are not overwritten by automatic classification.

Correct the label when:

- A real customer inquiry was marked as sales.

- A vendor pitch was marked legitimate.

- A partnership inquiry needs human review.

- A hiring or agency message does not fit the form's purpose.

Do not try to review every row forever. The point is to reduce work. Start by checking suspicious rows and obvious mistakes.
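The rule that manual corrections are not overwritten by automatic classification can be pictured as a simple precedence check. The following is a minimal sketch under assumed names (`LabelState`, `apply_auto_label`, `apply_manual_label` are hypothetical); it shows the behavior described above, not how FORMLOVA stores labels internally.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LabelState:
    """Hypothetical label state for one response."""
    label: Optional[str] = None   # "legitimate", "sales", or "suspicious"
    source: Optional[str] = None  # "auto" or "manual"


def apply_auto_label(state: LabelState, new_label: str) -> LabelState:
    """Apply an automatic classification unless a human already decided."""
    if state.source == "manual":
        return state  # manual labels always win
    return LabelState(label=new_label, source="auto")


def apply_manual_label(state: LabelState, new_label: str) -> LabelState:
    """A human correction replaces whatever label was there before."""
    return LabelState(label=new_label, source="manual")
```

With this ordering, a response that was auto-labeled sales and then corrected to legitimate stays legitimate even if a later automatic pass would label it sales again.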

Exclude Sales Emails from Analytics

This is where the feature changes reporting.

If your form received ten submissions and eight were sales pitches, the real inquiry count is two, not ten. Reporting on the raw number distorts conversion rate, cost per inquiry, and channel quality.

Ask FORMLOVA:

Show this month's contact form analytics, excluding sales emails.

Or:

Calculate last week's CVR without sales emails.

The system excludes responses labeled sales. It keeps suspicious responses visible because uncertain responses may still be real.

Use two numbers in reports:

| Metric | Meaning |
|---|---|
| Total responses | Everything submitted |
| Responses excluding sales | The operationally meaningful number |

The second number is usually the one you want for marketing and sales reporting.
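To make the arithmetic concrete, here is a small Python sketch of the two numbers, using the ten-submission example from this section. The helper names and the figure of 500 form visits are hypothetical, chosen only to show how much the conversion rate shifts. Only rows labeled sales are dropped; suspicious rows stay in the count, as described above.

```python
from typing import List, Optional, Tuple


def inquiry_counts(labels: List[Optional[str]]) -> Tuple[int, int]:
    """Return (total responses, responses excluding sales)."""
    total = len(labels)
    excluding_sales = sum(1 for label in labels if label != "sales")
    return total, excluding_sales


def conversion_rate(conversions: int, visits: int) -> float:
    """Conversions divided by visits; 0.0 when there were no visits."""
    return conversions / visits if visits else 0.0


# Ten submissions, eight of them sales pitches.
labels = ["sales"] * 8 + ["legitimate", "suspicious"]
total, clean = inquiry_counts(labels)
print(total, clean)  # 10 2

visits = 500  # hypothetical number of form visits
print(f"{conversion_rate(total, visits):.1%}")  # 2.0%
print(f"{conversion_rate(clean, visits):.1%}")  # 0.4%
```

In this made-up case the raw number overstates the conversion rate by a factor of five, which is why reports should carry both numbers.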

Exclude Sales Emails from CSV and Excel

Exports can include or exclude sales emails depending on the use case.

For a clean response list:

Export the contact form responses as CSV, excluding sales emails.

For audit or full history:

Export all responses, including sales emails.

Recommended split:

| Use case | Export |
|---|---|
| Customer follow-up | Exclude sales |
| Ad performance report | Exclude sales |
| Internal audit | Include all |
| Classification review | Export sales and suspicious rows |

The goal is not to erase data. The goal is to choose the right dataset for the job.
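If you post-process an export yourself, the same split is easy to reproduce. The sketch below uses Python's standard `csv` module; the column names (`id`, `email`, `label`) and the `export_csv` helper are assumptions for illustration and may not match the columns in a real FORMLOVA export.

```python
import csv
import io
from typing import Dict, List


def export_csv(rows: List[Dict[str, object]], include_sales: bool = False) -> str:
    """Serialize rows to CSV text, dropping sales-labeled rows by default.

    Suspicious and unlabeled rows are always kept.
    """
    kept = [r for r in rows if include_sales or r.get("label") != "sales"]
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["id", "email", "label"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(kept)
    return buffer.getvalue()


rows = [
    {"id": 1, "email": "a@example.com", "label": "legitimate"},
    {"id": 2, "email": "b@example.com", "label": "sales"},
]
clean_export = export_csv(rows)                      # customer follow-up
full_export = export_csv(rows, include_sales=True)   # internal audit
```

The source data is never modified here; the flag only chooses which dataset gets written.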

Use Labels in Workflows

Labels can drive workflow conditions.

For Slack:

Notify Slack when the contact form receives a response, but skip sales emails.

For CRM routing:

Add legitimate contact form responses to HubSpot, excluding sales emails.

Start carefully. Do not immediately send harsh automatic replies to sales-labeled messages. A false positive could create a poor experience for a real prospect. First use labels for filtering, analytics, and internal routing. Move into automated replies only after you trust the pattern.
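The two workflow conditions above amount to small predicates on the label. This is a hedged Python sketch with hypothetical function names; in particular, how unlabeled responses should be routed to a CRM is an assumption here, not documented behavior.

```python
from typing import Optional


def should_notify_slack(label: Optional[str]) -> bool:
    """Skip only rows explicitly labeled sales.

    Suspicious and unlabeled rows still notify, so a real inquiry
    is never silenced by an uncertain or missing label.
    """
    return label != "sales"


def should_route_to_crm(label: Optional[str]) -> bool:
    """Send only legitimate rows to the CRM.

    Assumption: suspicious and unlabeled rows wait for human review
    instead of being pushed downstream automatically.
    """
    return label == "legitimate"
```

Note the asymmetry: notifications err toward showing more, while CRM routing errs toward sending less. That matches the advice to start with filtering and internal routing before anything customer-facing.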

Troubleshooting

Some responses have no label

A response can be unlabeled for several reasons: it arrived before detection was enabled, the form has no text fields, the form is a paid event flow, or classification timed out. In every case the response is still stored; FORMLOVA does not reject a submission because classification failed.
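The "store first, label second" behavior can be sketched as follows. This is an illustration of the principle under assumed names (`store_response`, a `classifier` callable, a list standing in for storage), not FORMLOVA's implementation.

```python
from typing import Callable, Dict, List


def store_response(
    response: Dict[str, object],
    classifier: Callable[[Dict[str, object]], str],
    storage: List[Dict[str, object]],
) -> Dict[str, object]:
    """Persist the submission, then try to label it.

    If the classifier raises (for example on a timeout), the stored
    row simply keeps label=None; the submission itself is never lost.
    """
    record = {**response, "label": None}
    storage.append(record)  # stored before any classification is attempted
    try:
        record["label"] = classifier(response)
    except Exception:
        pass  # classification failed; the row stays unlabeled
    return record
```

Because storage happens before classification, a slow or failing classifier can only produce an unlabeled row, never a missing one.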

Sales pitches are labeled legitimate

FORMLOVA leans toward legitimate when uncertain. This avoids hiding real inquiries. Correct the label manually when a sales pitch gets through.

Real inquiries are labeled sales

Correct them immediately. Partnership, agency, recruiting, and vendor-related messages often require context. Use suspicious when the answer should be reviewed by a person.

The score is high but wrong

The score is confidence, not truth. Use it to prioritize review, not to replace judgment.

Related Workflows You Can Use

When a response is a real lead, Response to HubSpot Contact is the strongest Workflow Place route to add. It keeps sales-worthy submissions from sitting beside obvious sales pitches in the same response table.

For deeper sales handling, combine it with Lead-score Routing, Pricing Inquiry Follow-up, or Demo Request Handoff. The point is not only to detect noise, but to move valid demand to the right follow-up path.

Summary

Sales email detection keeps contact form operations cleaner without silently deleting responses.

Enable it on forms that receive free-text responses. Review suspicious rows. Correct mistakes. Exclude sales-labeled responses from analytics, exports, and noisy workflows.

Start with:

Turn on sales email detection for the contact form.

Then use labels to keep real inquiries visible.

Related Articles

- Contact Form Spam Guide

- Contact Form Operations Guide

- MCP Form Service Guide

- AI Now Detects Sales Emails in Your Forms

- Why We Built Sales Email Detection

- Export Responses to CSV or Sync Them to Google Sheets

Disclosure and Verification

This article is part of the FORMLOVA product blog. The author is the developer of FORMLOVA. Product facts, pricing, limits, and comparison claims should be checked against the current FORMLOVA spec, plan definitions, and relevant primary sources before publication or major updates. For privacy, hiring, legal, medical, or financial workflows, follow your organization's policies and specialist review.